What Is Edge Deployment? Running rPPG on Embedded Hardware

What edge deployment of rPPG on embedded hardware means for OEMs, from latency and privacy to quantization, thermals, and camera-specific model design.

Edge deployment of rPPG on embedded hardware has moved from a research curiosity to a product architecture question. Hardware OEMs, automotive Tier-1 suppliers, and IoT device makers are no longer asking whether remote photoplethysmography (rPPG) can work in principle. They are asking whether it can run on the camera, processor, and thermal envelope they already plan to ship. That is a very different conversation, and usually a more expensive one.

“The real challenge is not just estimating heart rate from video. It is doing so with low latency, low power, and limited memory on commodity edge platforms.” That is the core message of RhythmEdge by Zahid Hasan, Emon Dey, Sreenivasan Ramasamy Ramamurthy, Nirmalya Roy, and Archan Misra (SMARTCOMP 2022), which profiled rPPG on Jetson Nano, Coral Dev Board, and Raspberry Pi.

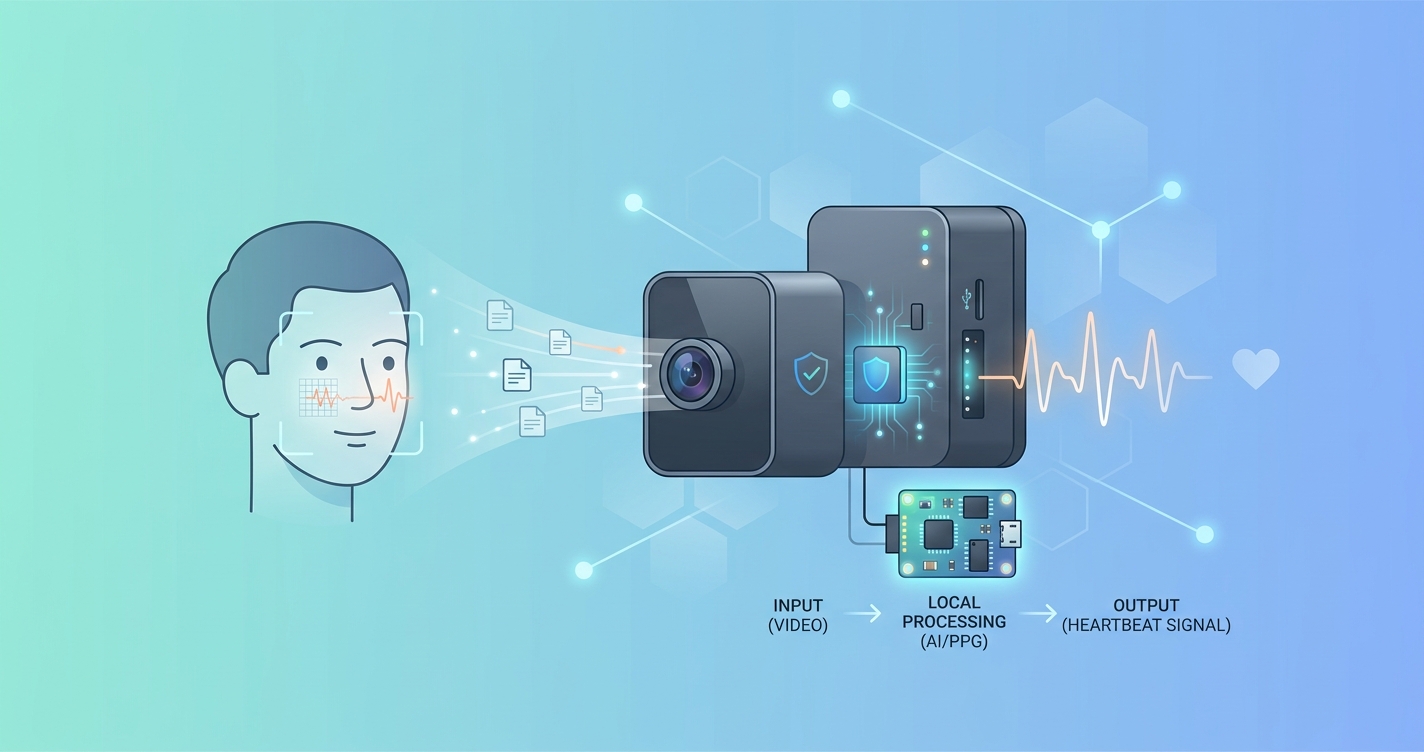

Edge deployment of rPPG on embedded hardware means inference lives near the sensor

In plain language, edge deployment means the vital-sign pipeline runs on or near the camera instead of shipping raw video to a distant server for most of the work. For rPPG, that usually includes frame capture, face or ROI tracking, temporal buffering, signal-quality checks, and at least part of the physiological inference stack.
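To make the temporal-buffering step concrete, here is a minimal sketch rather than a production method. It keeps a fixed-length window of per-frame ROI mean values and picks the strongest frequency in an assumed 0.7 to 3.0 Hz band (roughly 42 to 180 bpm). The class name `SlidingWindowPulse`, the 10-second window, and the band limits are illustrative assumptions; a real pipeline would add detrending, motion handling, and signal-quality checks.

```python
import math
from collections import deque


class SlidingWindowPulse:
    """Hypothetical helper: fixed-length buffer of per-frame ROI mean values."""

    def __init__(self, fps, window_seconds=10.0):
        self.fps = fps
        # A deque with maxlen drops the oldest sample automatically.
        self.samples = deque(maxlen=int(fps * window_seconds))

    def push(self, roi_mean):
        self.samples.append(roi_mean)

    def is_full(self):
        return len(self.samples) == self.samples.maxlen

    def estimate_bpm(self, lo_hz=0.7, hi_hz=3.0, step_hz=0.01):
        """Return the dominant in-band frequency in beats per minute, or None."""
        if not self.is_full():
            return None
        n = len(self.samples)
        mean = sum(self.samples) / n
        centered = [s - mean for s in self.samples]  # remove the DC level
        best_freq, best_power = None, -1.0
        steps = int(round((hi_hz - lo_hz) / step_hz))
        for k in range(steps + 1):
            freq = lo_hz + k * step_hz
            omega = 2.0 * math.pi * freq / self.fps
            # Direct DFT evaluation at one candidate frequency.
            re = sum(x * math.cos(omega * i) for i, x in enumerate(centered))
            im = sum(x * math.sin(omega * i) for i, x in enumerate(centered))
            power = re * re + im * im
            if power > best_power:
                best_freq, best_power = freq, power
        return best_freq * 60.0


# Synthetic check: a 1.2 Hz (72 bpm) ripple on a constant brightness level.
window = SlidingWindowPulse(fps=30, window_seconds=10.0)
for i in range(300):
    window.push(100.0 + 0.5 * math.sin(2.0 * math.pi * 1.2 * i / 30))
bpm = window.estimate_bpm()
```

The point of the sketch is the shape of the problem: nothing can be estimated until the window fills, and every frame after that has to be buffered somewhere on the device.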

That matters because rPPG is not a single forward pass through a model. It is a time-series problem wrapped inside a camera system. The stack has to live with:

- Fixed frame-rate limits

- ISP behavior that can distort subtle pixel changes

- Memory pressure from temporal windows

- Thermal ceilings on embedded SoCs

- Connectivity conditions that may be unreliable or expensive

A cloud demo can hide some of that. A production device cannot.
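The memory-pressure item is easy to quantify with back-of-the-envelope arithmetic. The sketch below assumes an uncompressed window held in RAM; the function name, resolutions, frame rate, and window length are all illustrative assumptions, not figures from any cited study.

```python
def window_bytes(width, height, channels, fps, seconds, bytes_per_value=1):
    """Bytes needed to hold an uncompressed temporal window of frames."""
    frames = int(fps * seconds)
    return width * height * channels * bytes_per_value * frames


# Assumed example: a 10-second window at 30 fps.
full_frame_u8 = window_bytes(640, 480, 3, fps=30, seconds=10)
face_crop_u8 = window_bytes(72, 72, 3, fps=30, seconds=10)
face_crop_f32 = window_bytes(72, 72, 3, fps=30, seconds=10, bytes_per_value=4)

for name, size in [
    ("640x480 uint8", full_frame_u8),
    ("72x72 uint8", face_crop_u8),
    ("72x72 float32", face_crop_f32),
]:
    print(f"{name}: {size / 2**20:.1f} MiB")
```

Under these assumptions, buffering full frames costs hundreds of megabytes while buffering a small face crop costs a few, which is one reason ROI extraction tends to happen before the temporal buffer rather than after it.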

Why embedded teams push rPPG to the edge

The business case for local execution is usually less dramatic than the marketing copy suggests. It comes down to system constraints.

| Decision factor | Cloud-heavy architecture | Edge-first architecture |
|---|---|---|
| Video transport | Continuous upstream dependency | More processing stays local |
| Latency | Includes network round trips | Mostly bounded by device compute |
| Privacy posture | Wider movement of raw frames | Smaller raw-video footprint |
| Offline tolerance | Weak when connectivity drops | Better continuity during outages |
| Device tuning | Generic deployment assumptions | Camera- and ISP-specific tuning |
| Fleet operations | Easier centralized updates | Harder per-device optimization |

For an automotive program, local inference reduces dependence on network availability inside the vehicle. For a kiosk or smart display, it can simplify privacy reviews because fewer raw frames leave the box. For battery-aware IoT hardware, the tradeoff becomes more subtle: streaming video burns radio power, but local inference burns compute and heat. Either way, the architecture has to be matched to the hardware.
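The radio-versus-compute tradeoff can be framed as a simple energy comparison. This is a rough sketch only: the two helper functions are hypothetical, and every number below is a placeholder to be replaced with measurements from the actual radio and SoC.

```python
def streaming_energy_j(bitrate_mbps, joules_per_megabit, seconds):
    """Energy spent pushing video over the radio for a given duration."""
    return bitrate_mbps * joules_per_megabit * seconds


def local_energy_j(inference_watts, duty_cycle, seconds):
    """Energy spent on local inference, scaled by the fraction of time it is active."""
    return inference_watts * duty_cycle * seconds


# Placeholder values for one minute of operation (not measured data).
stream_j = streaming_energy_j(bitrate_mbps=2.0, joules_per_megabit=0.5, seconds=60)
local_j = local_energy_j(inference_watts=3.0, duty_cycle=0.25, seconds=60)
print(f"streaming: {stream_j:.1f} J, local inference: {local_j:.1f} J")
```

Which side wins depends entirely on the measured inputs, which is the practical meaning of matching the architecture to the hardware.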

The embedded bottleneck is usually the whole pipeline, not the model alone

One reason edge projects slip is that teams underestimate the non-model work. Xin Liu, Brian Hill, Ziheng Jiang, Shwetak Patel, and Daniel McDuff argued in EfficientPhys (ECCV 2022) that preprocessing overhead is a serious part of deployment cost. That observation lands hard in embedded systems, where face detection, ROI management, exposure handling, and temporal buffering can be as consequential as the neural network itself.

A lightweight model still fails if the surrounding pipeline is heavy. In practice, embedded rPPG programs often need to budget for:

- Sensor capture and synchronization

- Facial ROI extraction or tracking

- Motion handling

- Sliding-window storage for temporal inference

- Quantized inference runtime

- Result smoothing and confidence logic

- Device APIs, logging, and watchdog behavior

I keep coming back to this because it changes procurement decisions. A team buying “an rPPG model” is usually buying a full device-side signal pipeline whether they realize it or not.
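One way to make that budgeting explicit is a per-stage latency table checked against the frame period. The sketch below uses hypothetical stage names that mirror the list above and placeholder timings; the real numbers have to come from profiling on the target device.

```python
def check_frame_budget(stage_ms, fps):
    """Compare summed per-frame stage latencies against the frame period."""
    budget_ms = 1000.0 / fps
    total_ms = sum(stage_ms.values())
    return {
        "budget_ms": budget_ms,
        "total_ms": total_ms,
        "headroom_ms": budget_ms - total_ms,
        "fits": total_ms <= budget_ms,
        "slowest_stage": max(stage_ms, key=stage_ms.get),
    }


# Placeholder per-frame timings in milliseconds (assumed, not measured).
stages = {
    "capture_sync": 4.0,
    "roi_tracking": 9.0,
    "motion_handling": 3.0,
    "window_update": 1.0,
    "quantized_inference": 8.0,
    "smoothing_confidence": 0.5,
    "logging_watchdog": 0.5,
}

report_30 = check_frame_budget(stages, fps=30)
report_60 = check_frame_budget(stages, fps=60)
print(report_30["slowest_stage"], report_30["fits"], report_60["fits"])
```

In this made-up profile the model accounts for 8 ms of a 26 ms pipeline, so shrinking the network alone would not rescue a 60 fps target.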

Industry applications where edge deployment matters

Automotive and in-cabin monitoring

Automotive teams care about determinism. A pulse-estimation pipeline that depends on remote connectivity is hard to defend in a cabin where lighting shifts, motion artifacts, and concurrent safety workloads already compete for compute. Embedded deployment lets rPPG share a local SoC with other sensing functions, though that introduces strict power and latency budgets.

Clinical kiosks and point-of-care terminals

Clinical and retail kiosks often sit behind conservative IT policies. In those settings, it is easier to justify a device that processes face video locally and sends compact outputs upstream than one that streams raw video across the network. This is also where camera-specific tuning starts to matter; a weak CPU or aggressive ISP can erase useful signal before inference begins.
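To illustrate what "compact outputs" can mean in practice, here is a small sketch of a device-side message. The function name, field names, and device ID are hypothetical and do not describe any particular product's protocol.

```python
import json


def build_vitals_message(device_id, bpm, confidence, window_seconds):
    """Serialize one estimate as compact JSON bytes (hypothetical schema)."""
    message = {
        "device_id": device_id,
        "bpm": round(bpm, 1),
        "confidence": round(confidence, 2),
        "window_seconds": window_seconds,
    }
    return json.dumps(message, separators=(",", ":")).encode("utf-8")


payload = build_vitals_message("kiosk-001", 71.6, 0.87, 10)
raw_frame_bytes = 640 * 480 * 3  # one uncompressed RGB frame, for comparison

print(len(payload), "bytes per estimate vs", raw_frame_bytes, "bytes per raw frame")
```

A message like this carries no face imagery at all, which is typically the property a privacy review is looking for.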

Smart displays, wearables, and IoT enclosures

For IoT device makers, BOM and thermal design usually decide the architecture. If local inference requires a more expensive compute module, a larger enclosure, or active cooling, the deployment equation changes fast. Edge deployment only makes sense when the pipeline is compact enough to coexist with the rest of the product.

Current research and evidence

The research base now says fairly clearly that edge deployment is feasible, but only with careful optimization.

Hasan, Dey, Ramamurthy, Roy, and Misra reported in RhythmEdge (2022) that contactless heart-rate estimation could run on Jetson Nano, Coral Dev Board, and Raspberry Pi with a maximum power draw around 8 watts, memory use near 290 MB, and latency as low as 0.0625 seconds in their profiled setup. Those are not smartphone-class numbers, but they are realistic enough to show that embedded rPPG is no longer theoretical.
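Those reported figures are useful as a sanity check against a target device's envelope. The sketch below encodes the approximate numbers quoted above and compares them with a hypothetical product envelope. Treating the 0.0625-second latency as a per-inference cost is an assumption made here for illustration; the helper names and envelope values are also made up.

```python
# Approximate figures as quoted above for the RhythmEdge profiled setup.
REPORTED_PROFILE = {"max_power_w": 8.0, "memory_mb": 290.0, "latency_s": 0.0625}


def implied_rate_hz(latency_s):
    """Inferences per second if each one takes latency_s (assumes no pipelining)."""
    return 1.0 / latency_s


def envelope_violations(profile, envelope):
    """Return the keys where the profile exceeds the device envelope."""
    return [key for key in profile if profile[key] > envelope[key]]


# Hypothetical envelope for a fanless device (placeholder values).
envelope = {"max_power_w": 5.0, "memory_mb": 512.0, "latency_s": 0.1}

rate = implied_rate_hz(REPORTED_PROFILE["latency_s"])
violations = envelope_violations(REPORTED_PROFILE, envelope)
print(rate, violations)
```

With these placeholder limits, memory and latency fit but peak power does not, which is the kind of result that sends a program back to thermal design rather than model design.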

Changchen Zhao, Pengcheng Cao, Shoushuai Xu, Zhengguo Li, and Yuanjing Feng took a complementary approach in Pruning rPPG Networks: Toward Small Dense Network With Limited Number of Training Samples (CVPR Workshops 2022). Their work focused on pruning small rPPG models without destroying signal quality, which matters for OEMs trying to fit inference into tighter FLOP and memory budgets.
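For readers unfamiliar with pruning, the simplest variant is unstructured magnitude pruning: zero out the weights with the smallest absolute values. The sketch below shows that generic idea on a flat list of weights. It is not the method from the paper above, and the function name and example weights are illustrative.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero the smallest-magnitude fraction of weights (generic magnitude pruning)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


weights = [0.5, -0.1, 0.03, -0.8, 0.2, 0.01]
pruned = prune_by_magnitude(weights, sparsity=0.5)
print(pruned)  # → [0.5, 0.0, 0.0, -0.8, 0.2, 0.0]
```

Zeroed weights only save compute if the runtime exploits sparsity or the zeros are removed structurally, which is part of why pruning results have to be validated on the target hardware.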

The broader field review also helps explain why this remains a hardware-specific problem. In Remote Photoplethysmography: A Review published in Sensors in 2022, the authors summarized how motion, ambient light variation, frame-rate constraints, and camera characteristics all shape performance. That is exactly why embedded deployment cannot be treated as a last-mile packaging step.

There is also a compression problem. Research on compression-aware deep-learning rPPG has shown that video encoding choices can degrade the signal before inference. For embedded programs, that matters because edge architectures often mix local processing with compressed storage or network backhaul. If the signal dies in the codec, the model never gets a fair shot.

What engineering teams compare before committing to edge deployment

Most buyers compare a few architecture patterns before a program is approved.

| Architecture pattern | What runs on-device | Common fit | Main engineering risk |
|---|---|---|---|
| Capture-only | Camera and upload logic | Fast pilots | Network dependency |
| Hybrid edge | Capture, ROI extraction, quality scoring, partial inference | Commercial pilots | Split-stack complexity |
| Full edge inference | Capture through vitals estimation | Automotive, kiosks, secure sites | Thermals, memory, compute ceilings |
| Edge plus centralized retraining | Local live inference, remote model lifecycle | Standardized fleets | Version drift across hardware |

A few patterns show up again and again:

- If connectivity is inconsistent, more of the pipeline moves local

- If privacy review is strict, raw-video movement gets minimized

- If the device has a customized camera and ISP, model tuning becomes mandatory

- If the thermal envelope is tiny, pruning and quantization stop being optional

That last point is worth stating plainly. Edge deployment is usually a model-optimization problem only at the start. By the time a product is ready to ship, it becomes a hardware-software co-design problem.
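Since quantization comes up repeatedly, here is a minimal sketch of what it does to a set of weights. It implements generic affine (asymmetric) int8 quantization in plain Python and is not tied to any particular inference runtime; the function names and example values are assumptions for illustration.

```python
def quantize_int8(values):
    """Affine int8 quantization of a list of floats."""
    lo = min(min(values), 0.0)  # include 0.0 so it stays exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for all-zero input
    zero_point = int(round(-128 - lo / scale))
    quantized = [
        max(-128, min(127, int(round(v / scale)) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize_int8(quantized, scale, zero_point):
    """Map int8 codes back to approximate float values."""
    return [(q - zero_point) * scale for q in quantized]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(codes, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, f"scale={scale:.5f}", f"max_error={max_error:.5f}")
```

Each value now fits in one byte instead of four, at the cost of a rounding error on the order of the scale. For a signal as subtle as rPPG, whether that error is acceptable has to be checked on the target device.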

The future of edge deployment for rPPG embedded hardware

The next phase looks less like “one generic rPPG model everywhere” and more like device-specific builds. I would expect three shifts.

First, more camera-specific training. Teams are already finding that general models degrade when sensor characteristics, frame timing, or ISP behavior change. That is why posts like “why one-size-fits-all rPPG models fail” keep resonating with technical buyers.

Second, more hybrid deployments. Even when cloud analytics remain useful, buyers increasingly want signal extraction and quality checks near the sensor. That split keeps the time-sensitive work local while leaving fleet management and model updates centralized.

Third, more low-light and NIR optimization for embedded programs in fixed environments. That is especially relevant for buyers evaluating how to optimize rPPG for low-light and NIR conditions, where illumination design and camera choice heavily influence whether edge inference is practical.

Frequently Asked Questions

What is edge deployment in rPPG?

It means running some or all of the camera-based vital-sign pipeline on local embedded compute rather than sending raw video to a remote server for full analysis.

Why do OEMs prefer edge deployment for embedded hardware?

Usually because it can reduce latency, improve resilience when connectivity is weak, and limit the amount of raw face video moving across networks.

Is pruning enough to make rPPG work on embedded devices?

Not by itself. Pruning helps, but teams still have to manage capture, ROI tracking, buffering, ISP effects, and runtime integration on the target hardware.

Does edge deployment remove the need for cloud infrastructure?

No. Many programs still use cloud systems for monitoring, device management, analytics, and model updates. The main difference is that the time-sensitive inference path lives closer to the sensor.

Edge deployment is really a product decision disguised as an AI decision. If your team is evaluating camera-specific rPPG on a kiosk, vehicle, smart display, or custom IoT device, Circadify is building for that exact problem space. Learn more about a custom embedded deployment at circadify.com/custom-builds.